Threat and Vulnerability Management

June 20, 2013 2 Comments

Threat and Vulnerability Management

The best way to ensure a fighting chance of discovering and defeating information exploitation and theft is to take a disciplined, programmatic approach to discovering and mitigating threats and vulnerabilities. Threat and Vulnerability Management is the cyclical practice of identifying, assessing, classifying, remediating, and mitigating security weaknesses together with fully understanding root cause analysis to address potential flaws in policy, process and, standards – such as configuration standards.

Vulnerability assessment and management is an essential piece for managing overall IT risk, because:

- Persistent Threats –

- Attacks exploiting security vulnerabilities for financial gain and criminal agendas continue to dominate headlines.

- Regulation –

- Many government and industry regulations, such as HIPAA-HITECH, PCI DSS V2 and Sarbanes-Oxley (SOX), mandate rigorous vulnerability management practices

- Risk Management –

- Mature organizations treat it as a key risk management component.

- Organizations that follow mature IT security principles understand the importance of risk management.

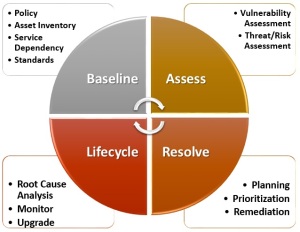

Properly planned and implemented threat and vulnerability management programs represent a key element in an organization’s information security program, providing an approach to risk and threat mitigation that is proactive and business-aligned, not just reactive and technology-focused. Threat and vulnerability management programs include the following 4 major elements:

- Baseline

- Assess

- Remediate

- Lifecycle Management

Each of these elements individually benefits the organization in many ways, but together they form interlocking parts of an integrated, effective threat and vulnerability management program.

Baseline

Policy

The threat and vulnerability management life cycle begins with the definition of policies, standards and specifications that define access restrictions, and includes configuration settings that harden the IT infrastructure against external or internal threats. Security configuration policies and specifications should be based on industry-recognized best practices such as the Center for Internet Security (CIS) benchmarks or National Institute of Standards and Technology (NIST) recommendations.

The development of security configuration policies and specifications is an iterative process that starts with industry standards and best practices as a desired state. However, many organizations may also need to define exceptions in order to accommodate specific applications or administrative processes within their environment and track them for resolution.

Organizations should also consider a mapping of organization-specific configuration policies and operational processes to industry-recognized control frameworks and best practices. Organizations that take the extra step of mapping the policies that are implemented by vulnerability management to control standards and best practices can strengthen their posture with auditors and reduce the cost of compliance reporting through automation. The mapping enables compliance reporting from configuration assessments.

Asset Inventory

To protect information, it is essential to know where it resides. The asset inventory must include the physical and logical elements of the information infrastructure. It should include the location, business processes, data classification, and identified threats and risks for each element.

This inventory should also include the key criteria of the information that needs to be protected, such as the type of information being inventoried, classification for the information and any other critical data points the organization has identified. From this baseline inventory pertinent applications and systems can be identified to iteratively develop and update an Application Security Profile Catalog. It is important to begin to understand application roles and relationships (data flows, interfaces) for threat and risk analysis since a set of applications may provide a service or business function. This will be discussed in more detail in a future blog.

Service Dependency Mapping

Classification of assets according to the business processes that they support is a crucial element of the risk assessment that is used to prioritize remediation activities. Assets should be classified based on the applications they support, the data that is stored and their role in delivering crucial business services. The resource mapping and configuration management initiatives within the IT operations areas can begin to provide the IT resource and business process linkage that is needed for security risk assessment.

IT operational areas need service dependency maps for change impact analysis, to evaluate the business impact of an outage, and to implement and manage SLAs with business context. IT operations owns and maintains the asset groupings and asset repositories needed to support service dependency mappings.

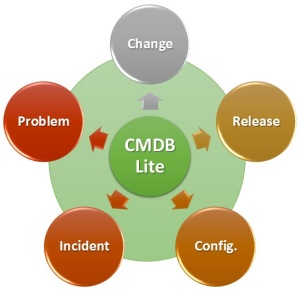

This information is typically stored in an enterprise directory, asset management system or a CMDB. Further details will be provided in the forthcoming Application Architecture Taxonomy blog.

The security resource needs the same information in order to include business context in the risk assessment of vulnerabilities, to prioritize security incidents, to publish security metrics with business context and to publish compliance reports that are focused on the assets that are in scope for specific regulations.

Security resources should engage IT application operations areas to determine the sources for IT service dependency maps and should configure security assessment functions to dynamically access or import this data for risk analysis, security monitoring and compliance reporting functions. The security team should also participate in CMDB projects as a stakeholder and supporter.

Configuration Standards by Device Role

A vulnerability management program focusing only on vulnerability assessment is weak regarding a crucial vulnerability management program objective — making the environment more secure. Although vulnerability assessment excels at discovering security weaknesses, its reporting isn’t optimized for the mitigation work performed by operations areas. Chasing individual vulnerabilities often does not eliminate the root cause of the problem. A large percentage of vulnerabilities results from underlying configuration issues (missing patches, ports that shouldn’t be open or services that shouldn’t be running).

The security resource should work with IT operations to define security configuration standards, and should use the security configuration assessment capability within their incumbent vulnerability assessment tool (if the vulnerability assessment tool provides it) to drive implementation of security configuration standards in desktop, network and server provisioning processes.

Threat and Vulnerability Analysis

To perform threat analysis effectively, it is important to employ a consistent methodology that examines the business and technical threats to an application or service. Adversaries use a combination of skills and techniques to exploit and compromise a business process or application, so it is necessary to have in place a similarly multipronged approach to defend against them that decomposes and analyzes systems.

Vulnerability Assessment

The next step is to assess the environment for known vulnerabilities, and to assess IT components using the security configuration policies (by device role) that have been defined for the environment. This is accomplished through scheduled vulnerability and configuration assessments of the environment.

Network-based vulnerability assessment (VA) has been the primary method employed to baseline networks, servers and hosts. The primary strength of VA is breadth of coverage. Thorough and accurate vulnerability assessments can be accomplished for managed systems via credentialed access. Unmanaged systems can be discovered and a basic assessment can be completed. The ability to evaluate databases and Web applications for security weaknesses is crucial, considering the rise of attacks that target these components.

Database scanners check database configuration and properties to verify whether they comply with database security best practices.

Web application scanners test an application’s logic for “abuse” cases that can break or exploit the application. Additional tools can be leveraged to perform more in-depth testing and analysis.

All three scanning technologies (network, application and database) assess a different class of security weaknesses, and most organizations need to implement all three.

Risk Assessment

Larger issues should be expressed in the language of risk (e.g., ISO 27005), specifically expressing impact in terms of business impact. The business case for any remedial action should incorporate considerations relating to the reduction of risk and compliance with policy. This incorporates the basis of the action to be agreed on between the relevant line of business and the security team

“Fixing” the issue may involve acceptance of the risk, shifting of the risk to another party or reducing the risk by applying remedial action, which could be anything from a configuration change to implementing a new infrastructure (e.g., data loss prevention, firewalls, host intrusion prevention software).

Elimination of the root cause of security weaknesses may require changes to user administration and system provisioning processes. Many processes and often several teams may come into play (e.g., configuration management, change management, patch management). Monitoring and incident management processes are also required to maintain the environment.

For more details on threat and risk assessment best-practices see the blogs: Risk-Aware Security Architecture as well as Risk Assessment and Roadmap.

Vulnerability Enumeration

CVE – Common Vulnerabilities and Exposures

Common Vulnerabilities and Exposures (CVE®) is a dictionary of common names (i.e., CVE Identifiers) for publicly known information security vulnerabilities. CVE’s common identifiers make it easier to share data across separate network security databases and tools, and provide a baseline for evaluating the coverage of an organization’s security tools. If a report from one of your security tools incorporates CVE Identifiers, you may then quickly and accurately access fix information in one or more separate CVE-compatible databases to remediate the problem.

CVSS – Common Vulnerability Scoring System

The Common Vulnerability Scoring System (CVSS) provides an open framework for communicating the characteristics and impacts of IT vulnerabilities. Its quantitative model ensures repeatable accurate measurement while enabling users to see the underlying vulnerability characteristics that were used to generate the scores. Thus, CVSS is well suited as a standard measurement system for industries, organizations, and governments that need accurate and consistent vulnerability impact scores.

CWE – Common Weakness Enumeration

The Common Weakness Enumeration Specification (CWE) provides a common language of discourse for discussing, finding and dealing with the causes of software security vulnerabilities as they are found in code, design, or system architecture. Each individual CWE represents a single vulnerability type. CWEs are used as a classification mechanism that differentiates CVEs by the type of vulnerability they represent. For more details see: Common Weakness Enumeration.

Remediation Planning

Prioritization

Vulnerability and security configuration assessments typically generate very long remediation work lists, and this remediation work needs to be prioritized. When organizations initially implement vulnerability assessment and security configuration baselines, they typically discover that a large number of systems contain multiple vulnerabilities and security configuration errors. There is typically more mitigation work to do than the resources available to accomplish it.

The organization should implement a process to prioritize the mitigation of vulnerabilities discovered through vulnerability assessments and security configuration audits, and to prioritize the responses to security events. The prioritization should be based on an assessment of risk to the business. Four variables should be evaluated when prioritizing remediation and mitigation activities:

- Exploit Impact – the nature of the vulnerability and the level of access achieved.

- Exploit Likelihood – the likelihood that the vulnerability will be exploited.

- Mitigating Controls – the ability to shield the vulnerable asset from the exploit.

- Asset Criticality – the business use of the application or data that is associated with the vulnerable infrastructure or application.

Remediation

Security is improved only when mitigation activity is executed as a result of the baseline and monitoring functions. Remediation is facilitated through cross-organizational processes and workflow (trouble tickets). Although the vulnerability management process is security-focused, the majority of mitigation activities are carried out by the organization’s IT operations areas as part of the configuration and change management processes.

Separation of duties dictate that security teams should be responsible for policy development and assessment of the environment, but should not be responsible for resolving the vulnerable or noncompliant conditions. Information sharing between security and operations teams is crucial to properly use baseline and monitoring information to drive remediation activities.

For more details on remediation planning and execution see complementary blog: Vulnerability Assessment Remediation

Vulnerability Lifecycle Management

Vulnerability management uses the input from the threat and vulnerability analysis to mitigate the risk that has been posed by the identified threats and vulnerabilities. A vulnerability management program consists of a continuous process, a lifecycle as follows:

Monitor Baseline

While a threat and vulnerability management program can make an IT environment less susceptible to an attack, assessment and mitigation cannot completely protect the environment. It is not possible to immediately patch every system or eliminate every application weakness. Even if this were possible, users would still do things that allowed malicious code on systems.

In addition, zero-day attacks can occur without warning. Since perfect defenses are not practical or achievable, organizations should augment vulnerability management and shielding with more-effective monitoring. Targeted attacks take time to execute, and the longer a breach goes unnoticed, the greater the damage. Better monitoring is needed to detect targeted attacks in the early stages, before the final goals of the attack are achieved. Use security information and event management (SIEM) technologies or services to monitor, correlate and analyze activity across a wide range of systems and applications for conditions that might be early indicators of a security breach.

Root Cause Analysis

It is important to analyze security and vulnerability assessments in order to determine the root cause. In many cases, the root cause of a set of vulnerabilities lies within the provisioning, administration and maintenance processes of IT operations or within their development or the procurement processes of applications. Elimination of the root cause of security weaknesses may require changes to user administration and system provisioning processes.

Conclusion

In 2012, less than half of all vulnerabilities were easily exploitable, down from approximately 95 percent in 2000. In addition, fewer high severity flaws were found. The number of vulnerabilities with a score on the Common Vulnerability Scoring System (CVSS) of 7.0 or higher dropped to 34 percent of reported issues in 2012, down from a high of 51 percent in 2008.

Unfortunately, there are more than enough highly critical flaws to go around. In 2012, more than 9 percent of the publicly reported vulnerabilities had both a CVSS score of 9.9 and a low attack complexity, according to NSS Labs. Vulnerabilities disclosed in 2012 affected over 2,600 products from 1,330 vendors. New vendors who had not had a vulnerability disclosure accounted for 30% of the total vulnerabilities disclosed in 2012. While recurring vendors may still represent the bulk of vulnerabilities reported, research shows that the vulnerability and threat landscape continues to be highly dynamic.

Thanks for your interest!

Nige the Security Guy.